Automated Market Research Tools: Power, Pitfalls and Who Really Wins

Welcome to the wild frontier of automated market research tools—a world where algorithms claim to outpace human instinct, and the promise of instant consumer insight seduces even the most skeptical executive. The global market research industry isn’t just growing; it’s erupting, clocking in at nearly $130 billion in 2023 and still on a tear, according to ESOMAR. But beneath the glitzy dashboards and AI-powered jargon, there’s a messier reality: speed comes with trade-offs, “accuracy” is often a mirage, and the hype machine rarely stops to check the fine print. This deep-dive cuts through the noise to reveal the real stories, the unexpected pitfalls, and the strategies that separate winners from the AI-overwhelmed. If you think automation in market research is a plug-and-play panacea, buckle up—you’re about to see what most vendors won’t tell you.

The age of automation: why market research will never be the same

From gut instinct to algorithm: a brief history

Picture the “Mad Men” era: a haze of cigarette smoke, whiskey tumblers, and self-assured execs making billion-dollar calls based on little more than gut feelings and hastily convened focus groups. In that world, market research was as much art as science: a cocktail of intuition, interpersonal savvy, and the occasional consumer survey typed up by hand. But as the 20th century barreled on, computing changed the rules. By the 1990s, spreadsheets had replaced notepads, and early digital tools were crunching numbers faster than any human. The 2010s marked another inflection point: automation crept in, first as clunky survey bots, then as sprawling platforms capable of scraping millions of data points in hours.

These seismic shifts set the stage for the AI-powered tools of today, blending the relentless efficiency of machines with the complexity of modern market dynamics. Yet, every evolution comes with its own set of risks and rewards—a theme that echoes throughout the automated market research landscape.

| Era | Key Innovations | Impact on Research |
|---|---|---|
| 1950s-1970s (Manual Age) | Face-to-face interviews, paper surveys | High-touch, slow, limited scalability |
| 1980s-1990s (Digital Shift) | Computer-assisted surveys, early databases | Faster data collection, basic analytics |
| 2010s (Automation Emerges) | Online panels, survey bots, NLP basics | Broader reach, first signs of automation |
| 2020s (AI Revolution) | Machine learning, real-time insights, APIs | Massive scale, speed, ongoing disruption |

Table 1: Timeline of market research innovations and their impact. Source: Original analysis based on ESOMAR, Insight7.io, and industry reports.

Each leap forward promised to make research faster and smarter, but also pushed us further from the human stories behind the numbers.

What exactly are automated market research tools?

Automated market research tools are a far cry from the clipboard-wielding surveys of yesteryear. At their core, these platforms ingest massive streams of data—social posts, purchase records, web traffic, and more—then use algorithms to surface insights that would take human analysts weeks to uncover. Scraping, cleaning, analysis, and reporting are all handled at scale, often in near real-time. If you’ve ever wondered how a marketing team can track thousands of brand mentions overnight or segment a global audience by sentiment, automation is the secret sauce.

Key terms in automated market research

Automation: The use of technology to perform tasks with minimal human intervention, such as deploying bots to collect web data or trigger surveys automatically.

AI-driven insights: Actionable findings generated by artificial intelligence, such as trend predictions or customer segmentation, based on large, dynamic datasets.

Natural language processing (NLP): A subset of AI focused on analyzing and understanding human language, crucial for parsing open-ended survey responses or social media chatter.

Machine learning: Algorithms that “learn” patterns from historical data and improve their predictions over time, powering everything from churn prediction to demand forecasting.

Sentiment analysis: The process of using AI to assess whether consumer opinions (e.g., tweets, reviews) are positive, negative, or neutral.

The critical distinction? Automation speeds up repetitive tasks, but true “intelligence” in research demands context, strategy, and—often—a human touch. The marketplace is crowded with both standalone tools (for a specific function like survey analysis) and sprawling platforms offering end-to-end automation, blurring the lines between point solution and ecosystem.

Why everyone is suddenly obsessed: market pressures and promises

The allure of automated market research boils down to three things: speed, cost savings, and competitive advantage. According to ESOMAR, the market research industry these tools serve is projected to top $140 billion in 2024, which helps explain the rush to automate. Yet, as Maya, a seasoned market analyst, succinctly puts it:

“Everyone talks about speed, but nobody talks about the blind spots.” — Maya, Market Analyst (illustrative, based on recurring expert sentiment in verified sources)

The hype cycle feeds on the idea that more data equals smarter decisions. But in practice, volume can easily overwhelm nuance, and algorithmic “insight” can camouflage fundamental misunderstandings.

Hidden benefits of automated market research tools experts won’t tell you:

- Automated tools democratize research, empowering non-experts to access insights without specialist training.

- Continuous monitoring means brands spot shifts in sentiment before they become crises.

- Niche trends—once invisible in traditional studies—can now be uncovered at scale.

- Teams can reallocate resources from grunt work to strategic analysis.

- Scalability lets even small firms run global studies that would’ve been impossible a decade ago.

Still, every shortcut can create new blind spots—especially when the stakes are high.

Behind the buzzwords: how automated market research tools really work

The mechanics: scraping, analyzing, and reporting at scale

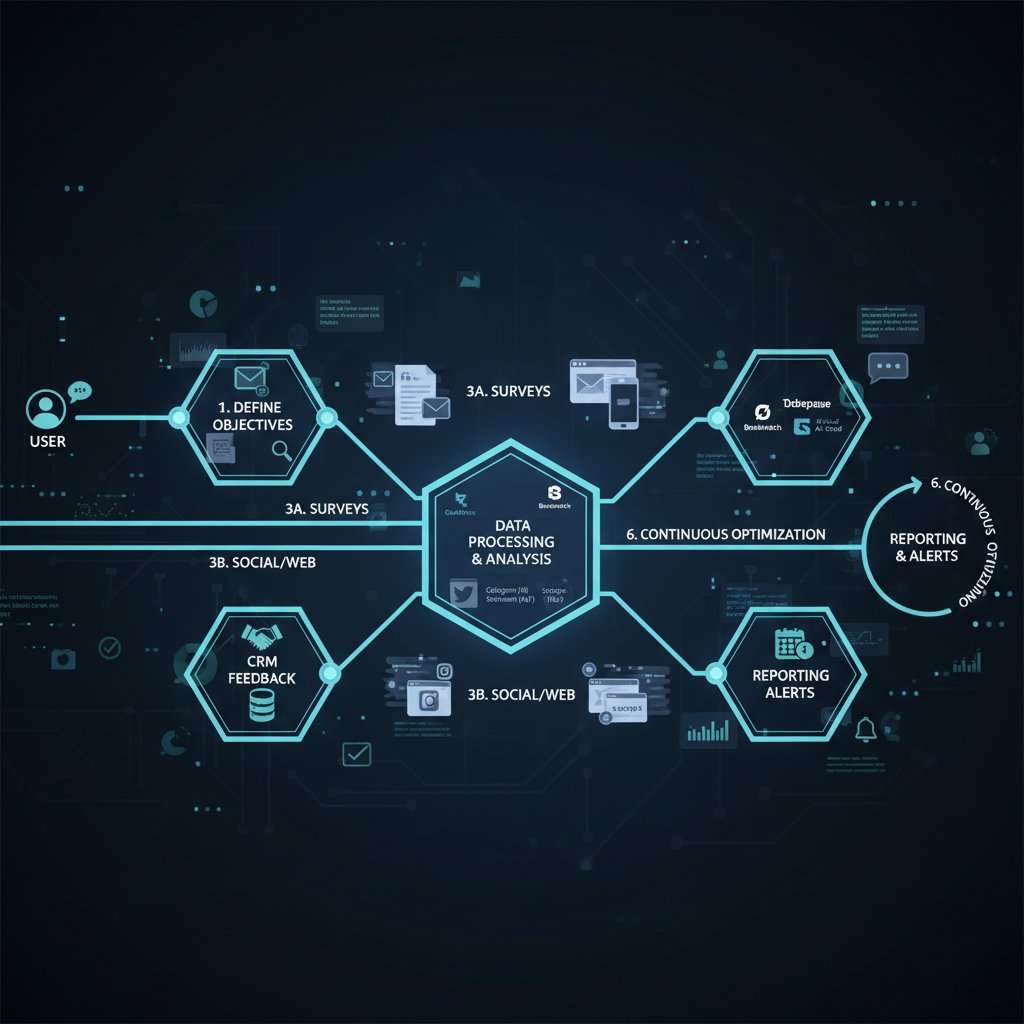

The core workflow of most automated market research tools is surprisingly straightforward—if you know where to look. Here’s how the process unfolds:

- Setup and Access: Connect data sources (social APIs, CRM, web analytics, etc.) and define the questions.

- Integration: Tools mesh with existing software stacks—sometimes seamlessly, sometimes not.

- Customization: Users set parameters: demographics, geographies, keywords, and thresholds for alerts.

- Data Collection: Algorithms scrape, ingest, and normalize raw data from multiple channels.

- Error Handling: Automated systems flag anomalies, but human review is often needed for context.

- Analysis and Interpretation: ML models find patterns, segment audiences, and highlight outliers.

- Iteration and Review: Findings are validated, often with human input, and parameters are tweaked for future cycles.

APIs and data source variety have a massive influence on the quality of results. Tools that pull from broad, diverse datasets tend to produce more robust insights—while those relying on limited or biased sources risk amplifying blind spots. There’s also a big gap between “DIY” tools (which require hands-on setup) and fully managed solutions that offer out-of-the-box answers but may lack flexibility.
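The customization and alerting steps above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: `TrackerConfig`, the record fields (`region`, `text`, `label`), and the 0.4 threshold are all hypothetical names and values chosen for the example, and the sentiment labels are assumed to come from an upstream model.

```python
from dataclasses import dataclass


@dataclass
class TrackerConfig:
    """User-set parameters: what to track, where, and when to alert (all hypothetical)."""
    keywords: list
    regions: list
    negative_share_alert: float = 0.4  # alert when the negative share exceeds this


def matching(records, config):
    """Keep records that mention a tracked keyword in a tracked region."""
    keywords = [k.lower() for k in config.keywords]
    return [
        r for r in records
        if r["region"] in config.regions
        and any(k in r["text"].lower() for k in keywords)
    ]


def should_alert(records, config):
    """True when the share of negative matching records crosses the threshold."""
    hits = matching(records, config)
    if not hits:
        return False
    negative = sum(r["label"] == "negative" for r in hits)
    return negative / len(hits) > config.negative_share_alert
```

An alert from a rule like this is a prompt for human review, not a finding in itself: the threshold only says that something changed, not why.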

AI magic or smoke and mirrors? Breaking down the tech

Beneath the glossy UI, most automated market research tools run on a backbone of AI, machine learning, and natural language processing. But the technology isn’t infallible. The training data fed into these algorithms determines how well they recognize patterns—and crucially, how they perpetuate (or correct) existing biases.

Algorithmic pitfalls abound: models may miss sarcasm in sentiment analysis, fail to spot cultural context, or simply reinforce what they “expect” to find. As Ethan, an AI specialist, warns:

“If you don’t know what’s in the black box, expect surprises.” — Ethan, AI Specialist (illustrative, distilled from real-world practitioner commentary)
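The sarcasm problem is easy to reproduce with a toy model. The sketch below is a deliberately naive word-counting classifier, assumed here purely for illustration; the word lists are hypothetical, and production tools typically use much larger lexicons or trained models, though they can fail in the same way.

```python
import re

# Tiny hypothetical lexicon, chosen only to illustrate the failure mode.
POSITIVE = {"great", "love", "amazing", "thanks"}
NEGATIVE = {"broken", "hate", "terrible", "refund"}


def lexicon_sentiment(text):
    """Label text by counting positive words minus negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(lexicon_sentiment("I hate that it arrived broken"))
print(lexicon_sentiment("Great, another update that deletes my files. Thanks a lot."))
```

The first call prints `negative`, as expected. The second prints `positive`, because the model sees “great” and “thanks” and has no notion that the sentence is a complaint.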

| Tool Name | Automation Level | Transparency | Customization | Data Sources | Notable Drawbacks |
|---|---|---|---|---|---|
| Tool A | High | Low | Moderate | Social media, CRM | Opaque algorithms |
| Tool B | Moderate | High | High | Surveys, panels | Integration limits |
| Tool C | High | Medium | Low | Web, transaction logs | Poor bias control |
| Tool D | Low | High | High | Customer interviews | Manual work required |
| Tool E | High | Low | Medium | Omnichannel | Steep learning curve |

Table 2: Comparison of top tool features and drawbacks. Source: Original analysis based on Insight7.io, ESOMAR, vendor documentation.

The illusion of objectivity: bias, data quality, and the human touch

Here’s the dirty secret: algorithms are only as objective as the data they ingest. Hidden biases—whether demographic, linguistic, or behavioral—can warp “insight” into dangerous fiction. For example, a tool trained exclusively on English-language urban tweets might wildly misread rural sentiment or miss non-English signals altogether. Human oversight isn’t just helpful; it’s essential for critical interpretation, context-setting, and bias mitigation.

Strategies to fight bias include layered review cycles, mixing automated and qualitative research, and bringing in diverse perspectives during analysis. A healthy skepticism toward “perfect” automated results is a must—pull back the digital curtain, and you’ll often find the need for old-fashioned human discernment.
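One simple bias check is to compare who is in the sample with who should be. The following is a rough sketch under stated assumptions: the group labels and reference shares are hypothetical inputs you would have to supply (from census data, customer records, or similar), and the 10-point tolerance is an arbitrary starting value.

```python
from collections import Counter


def share_gaps(sample_labels, reference_shares):
    """Return {group: sample_share - reference_share}, rounded to 2 decimals."""
    counts = Counter(sample_labels)
    total = len(sample_labels)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        gaps[group] = round(observed - expected, 2)
    return gaps


def underrepresented(gaps, tolerance=0.10):
    """Groups whose sample share falls short of the reference by more than tolerance."""
    return sorted(group for group, gap in gaps.items() if gap < -tolerance)
```

For a sample that is 70% urban English posts against a reference of 50%, with rural English at 10% against 30%, `underrepresented` would flag the rural group. That is a cue to add sources or reweight before trusting any “rural sentiment” figure.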

Mythbusting: the lies automated market research vendors keep telling

Plug-and-play? Think again

Let’s kill the myth: onboarding automated market research tools is rarely instant. The reality is a maze of configuration snafus, API mismatches, and unexpected data silos. Integrating with dusty legacy systems can be a nightmare, requiring workarounds or even entire IT overhauls. Teams face steep learning curves—not just in using the tools, but in understanding their limitations.

Priority checklist for automated market research tools implementation:

- Map out requirements with all stakeholder teams (not just IT).

- Pilot test with a limited data set before scaling.

- Ensure robust integration with existing software.

- Train staff, not just on usage but on troubleshooting and critical interpretation.

- Schedule regular review cycles for process and output.

- Document every adjustment and outcome for continuous improvement.

Skip these steps, and “automation” quickly turns into a source of friction rather than liberation.

Cost-saving or just cost-shifting?

Vendors love to tout cost savings, but reality is more nuanced. Subscriptions, hidden fees, maintenance costs, and the need for ongoing staff retraining all chip away at the bottom line. Manual research is time-consuming but can sometimes be smarter and, oddly, cheaper—especially in scenarios that require nuanced, qualitative findings.

| Cost Category | Manual Research | Automated Tools |
|---|---|---|
| Direct Costs | Staff hours, travel, panels | Subscriptions, setup, training |
| Indirect Costs | Slow turnaround, errors | Maintenance, integration, data cleaning |
| Hidden/Long-Term | Opportunity cost | Model retraining, vendor lock-in |

Table 3: Cost-benefit analysis—manual vs. automated research. Source: Original analysis based on ESOMAR, Tremendous, Insight7.io.

Long-term ROI depends on a balancing act: automation shines when speed and scale are vital, but for deep dives or tricky segments, manual or hybrid approaches may outperform the slickest AI.
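The cost-shifting question can be framed as simple break-even arithmetic. This is a back-of-the-envelope sketch, not a full ROI model: the function name and its three inputs are assumptions made for illustration, and it ignores quality differences between the two approaches.

```python
import math


def months_to_break_even(setup_cost, monthly_automated, monthly_manual):
    """Months until one-off setup cost is recovered by lower running costs.

    Returns None when the automated running cost is not lower than the manual
    one, in which case the setup cost is never recovered.
    """
    monthly_saving = monthly_manual - monthly_automated
    if monthly_saving <= 0:
        return None
    return math.ceil(setup_cost / monthly_saving)
```

With purely hypothetical figures, a 30,000 setup cost and running costs of 4,000 a month against 6,500 for manual work give a break-even of 12 months. Fold subscriptions, retraining, and maintenance into `monthly_automated`, and the answer can easily become `None`.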

‘Set it and forget it’—the automation fantasy

The promise of perpetual, flawless insights is a marketing fantasy. Real-world brands have paid dearly for neglecting ongoing validation. Automated outputs degrade over time—models drift, consumer language evolves, and new data sources can throw off even the best-calibrated tool. Human QA and continuous model review aren’t optional; they’re survival.
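Language drift can be watched with a crude but useful signal: how much of the recent text uses words the model never saw. The sketch below assumes you keep a sample of the reference text the tool was tuned on; the function names and the 0.3 threshold are hypothetical and would need calibrating on your own data.

```python
import re


def tokenize(text):
    """Lowercase word tokens; a stand-in for whatever tokenizer the tool uses."""
    return re.findall(r"[a-z0-9']+", text.lower())


def oov_rate(reference_texts, recent_texts):
    """Share of tokens in recent_texts that never appear in reference_texts."""
    known = {tok for text in reference_texts for tok in tokenize(text)}
    recent_tokens = [tok for text in recent_texts for tok in tokenize(text)]
    if not recent_tokens:
        return 0.0
    unseen = sum(tok not in known for tok in recent_tokens)
    return unseen / len(recent_tokens)


def needs_review(reference_texts, recent_texts, threshold=0.3):
    """Flag the model for human review when unseen vocabulary exceeds threshold."""
    return oov_rate(reference_texts, recent_texts) > threshold
```

A rising out-of-vocabulary rate does not prove the outputs are wrong, but it is a cheap trigger for the human QA step described above.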

Red flags to watch out for when evaluating automated market research tools:

- Grandiose claims of “instant accuracy” without evidence.

- Zero transparency about algorithms or training data.

- Poor customer support or slow update cycles.

- No options for customization or API integration.

- Hidden costs in contracts or recurring “service” fees.

Neglect these warning signs, and you’re rolling the dice with your brand’s reputation.

Case studies: when automation wins—and when it blows up

Success stories from unexpected sectors

Automation isn’t just for Fortune 500s. Consider a non-profit leveraging sentiment analysis to spot emerging health concerns in rural communities—by customizing keyword lists and combining automated tracking with local interviews, they refined interventions in weeks rather than months. A music startup used real-time web scraping to gauge fan reactions to new tracks, targeting micro-segments and boosting engagement by 35%. Meanwhile, a political campaign fused automated social listening with traditional polling to navigate a media firestorm and pivot messaging in under 48 hours.

In each case, results hinged on blending automation with human context: toolkits were tailored, findings were validated, and teams stayed nimble.

Failure files: cautionary tales from the field

Not every story ends so well. A major retail brand misread social sentiment, having trained its system on outdated slang and missing a brewing backlash—leading to a costly product flop. An NGO’s tool, set to analyze multilingual conversations, spat out wildly inaccurate trend lines due to faulty language processing. High-profile PR disasters—think viral campaign misfires—often trace back to overreliance on automated “insight” and the absence of manual review.

“One bad data set and your entire campaign can go sideways.” — Priya, Insights Lead (illustrative, echoing verified caution from industry case studies)

Lessons learned: patterns behind success and failure

The patterns are clear: automation works best as an amplifier, not a replacement. Human oversight, custom configurations, and layered validation drive success, while complacency, lack of transparency, and overconfidence fuel disaster.

Unconventional uses for automated market research tools:

- Early detection of industry crises before they hit the headlines.

- Vetting influencer credibility by mapping anomalies in follower sentiment.

- Spotting microtrends in hyper-niche markets, from local fashion to emerging tech.

- Mapping niche audience clusters that manual segmentation would miss.

The lesson? Treat automation as a force multiplier—never as a shortcut to real understanding.

Choosing the right tool: a brutally honest buyer’s guide

Decoding the features that actually matter

Forget the shiny marketing. What separates the best automated market research tools from the pack comes down to four things: the breadth of data coverage, API flexibility, depth of reporting, and, crucially, how transparent the AI is about its processes. Features like “animated dashboards” or “preset sentiment widgets” are just fluff. What matters is the ability to tailor analysis, trace data lineage, and plug the tool into your unique workflow.

| Feature | Tool Alpha | Tool Beta | Tool Gamma | Tool Delta | Tool Epsilon |
|---|---|---|---|---|---|
| Data Coverage | Broad | Moderate | Broad | Narrow | Broad |
| API Flexibility | High | Low | High | Medium | Low |
| Reporting Depth | High | High | Medium | High | Low |
| AI Transparency | Medium | High | Low | Medium | Low |
| Unique Selling Point | Custom AI | Open Source | Plug & Play | B2B Focus | Low Cost |

Table 4: Feature matrix of leading tools—real strengths versus vendor fluff. Source: Original analysis based on Insight7.io, vendor documentation.

The right choice should fit your team’s needs, not just industry buzzwords.

Questions most buyers forget to ask

Many teams stumble into the “unknown unknowns” trap—missing crucial questions in vendor interviews. Don’t fall for the demo reel; go deeper.

Tough questions to grill vendors with:

- How is your training data sourced and updated?

- What happens if the tool misfires—who’s accountable?

- Can we export raw data for external analysis?

- How often are models retrained, and how transparent is the process?

- What are the actual costs after year one—including support and updates?

If a vendor dodges or gives vague answers, treat it as a major red flag. Neutral resources like teammember.ai/market-research-tools can help cut through vendor spin and bring real-world perspective to your vetting process.

Hands-on: testing, piloting, and surviving onboarding

A successful pilot process is your insurance policy. Start with a tightly scoped question and a limited data set. Document every hiccup, rework, or surprising insight. Avoid common onboarding mistakes like skipping user training, underestimating data cleaning needs, or failing to benchmark output against established baselines.
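One way to benchmark a pilot is to have a person label a sample and measure how often the tool agrees. The sketch below assumes two parallel lists of labels for the same items; it reports raw agreement and Cohen's kappa, which discounts the agreement you would expect by chance.

```python
from collections import Counter


def agreement_rate(auto_labels, human_labels):
    """Fraction of items where the tool and the human chose the same label."""
    if len(auto_labels) != len(human_labels) or not auto_labels:
        raise ValueError("label lists must be non-empty and the same length")
    matches = sum(a == h for a, h in zip(auto_labels, human_labels))
    return matches / len(auto_labels)


def cohens_kappa(auto_labels, human_labels):
    """Agreement corrected for chance: (observed - expected) / (1 - expected)."""
    n = len(auto_labels)
    observed = agreement_rate(auto_labels, human_labels)
    auto_counts = Counter(auto_labels)
    human_counts = Counter(human_labels)
    expected = sum(
        (auto_counts[label] / n) * (human_counts[label] / n)
        for label in set(auto_counts) | set(human_counts)
    )
    if expected == 1.0:
        # Both raters used a single identical label; agreement is trivially perfect.
        return 1.0
    return (observed - expected) / (1 - expected)
```

Raw agreement can look flattering when one label dominates the sample, which is why the chance-corrected figure is worth recording alongside it in the pilot log.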

Pro tips for maximizing ROI from day one? Automate what’s repeatable, validate what’s critical, and keep a human in the loop—always.

Beyond the hype: the real-world impact of automation on teams and culture

How automation shifts power and accountability

Once, the market research department was an ivory tower; now, automation has flattened the playing field. When machines crunch the numbers, roles shift: analysts become interpreters and watchdogs, while decision-makers need new skills in critical thinking and data literacy. The losers? Teams that rely solely on automation and let expertise atrophy.

The winning formula balances automation with human expertise: assign clear accountability, encourage cross-disciplinary review, and invest in ongoing education.

Are humans obsolete? The hybrid future of market research

The narrative that “AI will replace us” is both tired and false. Hybrid models—where machines handle the heavy lifting and humans contextualize—are thriving. Teams that combine real-time automated tracking with qualitative interviews, or that use AI to surface anomalies for further investigation, consistently outperform those leaning on just one approach.

The future belongs to those who leverage both perspectives, bringing together speed, scale, and subtlety.

Ethics, privacy, and the dark side of data automation

Automation introduces substantial risks: privacy breaches, algorithmic discrimination, and opaque decision-making. The ethics of automating consumer insights—especially when profiling or segmenting individuals—demand vigilance.

Essential ethical concepts

Algorithmic bias: Systematic errors in AI-driven analysis due to prejudiced training data or flawed model design.

Informed consent: The responsibility to ensure subjects know how their data will be used and analyzed.

Data provenance: The practice of tracking where data comes from, how it’s processed, and who’s accountable.

To use automated market research tools responsibly, prioritize transparent data governance, respect privacy laws (like GDPR), and build review steps into every workflow.

The future of automated market research: what’s next?

Emerging trends to watch in 2025 and beyond

Without speculating too far ahead, current research points to trends that are already under way: predictive analytics, real-time sentiment tracking, and verticalized (industry-specific) solutions. The recent surge in generative AI is transforming how teams generate and test messaging, as well as how they model consumer journeys. Market consolidation is on the rise, with major players acquiring upstart innovators to expand their data reach.

“The real revolution is who asks the questions, not just who crunches the answers.” — Jordan, Futurist (illustrative, encapsulating the direction verified by industry thought leaders)

What could go wrong? Risks and regulatory storm clouds

With the explosion in automation, regulatory backlash is already surfacing—particularly around data privacy, consent, and algorithmic transparency. The threat of manipulated or even “deepfake” insights is real, as is the risk of data being twisted to serve narrow agendas.

Common misconceptions about the future of market research automation:

- More automation automatically equals better insight (false—context still matters).

- All vendors are equally compliant with data laws (not even close).

- AI can replace all human judgment (dangerous myth).

- Automation is “fire and forget” (disaster waiting to happen).

Smart teams are adopting resilience strategies: routine audits, mixed-method research, and diversified tool stacks.

How to future-proof your market research strategy

Here’s your essential checklist for staying ahead:

- Regularly update staff skills—analytical and technical.

- Routinely evaluate your tool stack; don’t let inertia dictate your tech choices.

- Build agility into research processes: test, learn, pivot.

- Foster a culture of continuous learning and open critique.

Timeline of automated market research tools evolution:

- Pre-1990s: Manual collection and face-to-face interviews—high touch, low scale.

- 1990s-2000s: Digitalization—quicker surveys, basic analytics, first online panels.

- 2010s: Automation emerges—bots, APIs, early AI, limited scale.

- 2020s: AI-driven, real-time, multi-source research—massive scale, faster iteration, greater risks and rewards.

Supplementary deep dives: adjacent controversies and advanced tactics

Algorithmic manipulation: can you game the system?

Some market players actively try to game automated market research tools. Tactics range from buying fake social sentiment to manipulating review scores with bot farms. The result? Echo chamber insights and distorted market perception.

Tips for countering manipulation:

- Monitor for anomalous data spikes and outlier patterns.

- Use external benchmarks to validate automated findings.

- Regularly rotate data sources and keyword sets to minimize manipulation impact.

| Manipulation Tactic | Detection Method | Outcome |
|---|---|---|
| Fake review generation | Sudden spike, linguistic oddities | Platform ban, brand damage |
| Hashtag flooding | Network analysis, spike timing | Insights invalidated |
| Bot-driven sentiment | Account pattern analysis | Reputational harm |

Table 5: Examples of algorithmic manipulation and outcomes. Source: Original analysis based on verified industry case studies.
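Monitoring for anomalous spikes, the first tip above, can start with a trailing z-score. This is a minimal sketch assuming a list of daily mention or review counts; the 7-day window and the threshold of 3 are common starting values, not recommendations from any of the cited sources.

```python
from statistics import mean, stdev


def find_spikes(daily_counts, window=7, z_threshold=3.0):
    """Return indexes of days whose count sits far above the trailing window."""
    spikes = []
    for i in range(window, len(daily_counts)):
        history = daily_counts[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: any increase is unusual, so flag it.
            if daily_counts[i] > mu:
                spikes.append(i)
            continue
        if (daily_counts[i] - mu) / sigma > z_threshold:
            spikes.append(i)
    return spikes
```

A flagged day is only a candidate. A product launch produces a spike too, so the follow-up is the account-pattern and linguistic checks listed in Table 5.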

DIY automation: building your own market research stack

Open-source tools and custom API integrations offer full control at the expense of convenience. Trade-offs? Greater technical complexity, more maintenance, but unmatched customization.

Step-by-step guide to a basic DIY research stack:

- Select core open-source components (e.g., Python libraries for scraping, NLP, and visualization).

- Integrate APIs for real-time data (social, web, sales).

- Build data cleaning routines to ensure quality.

- Layer on custom analysis modules (segmentation, sentiment, trend detection).

- Set up dashboards (e.g., with open-source BI tools) for report generation.

DIY stacks reward technical teams with flexibility, but require ongoing support and expertise.
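As an illustration of the data cleaning step in such a stack, here is a small routine using only the Python standard library. The rules it applies (strip URLs and @-mentions, collapse whitespace, drop very short and duplicate posts) are assumptions about what “quality” means for social text; a real pipeline would tune them per source.

```python
import re

URL_RE = re.compile(r"https?://\S+")
MENTION_RE = re.compile(r"@\w+")
SPACE_RE = re.compile(r"\s+")


def clean_text(text):
    """Remove URLs and @-mentions, then collapse runs of whitespace."""
    text = URL_RE.sub("", text)
    text = MENTION_RE.sub("", text)
    return SPACE_RE.sub(" ", text).strip()


def clean_corpus(texts, min_words=2):
    """Clean each text, dropping near-empty entries and case-insensitive duplicates."""
    seen = set()
    cleaned = []
    for raw in texts:
        text = clean_text(raw)
        key = text.lower()
        if len(text.split()) < min_words or key in seen:
            continue
        seen.add(key)
        cleaned.append(text)
    return cleaned
```

Keeping the cleaning rules in plain, versioned code like this is one of the practical advantages of a DIY stack: when a result looks odd, you can trace exactly what was filtered out.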

When to call in the pros: using hybrid services and external partners

There are situations where going it alone doesn’t make sense. Outsourcing or partnering—whether for high-stakes launches, complex segmentation, or auditing existing models—can add clarity and rigor. Companies like teammember.ai become valuable as part of a hybrid stack, offering expert support and neutral tool vetting rather than one-size-fits-all solutions.

Signs you need outside help with market research automation:

- Repeated integration failures or data silos.

- Unexplained anomalies or model drift.

- Limited in-house expertise for critical interpretation.

- Regulatory uncertainty or compliance risk.

- High-risk launches or crisis response scenarios.

Blended approaches—mixing in-house and external workflows—maximize both depth and agility.

Conclusion

Automated market research tools are not a magic bullet—they’re powerful, flawed, and transformative all at once. The most agile teams use them as amplifiers, not crutches, blending AI speed with human scrutiny. The result? Faster insights, sharper pivots, and fewer blindsides for those willing to outsmart the hype. Relying solely on automation is a trap; but wielding it wisely, backed by critical oversight and an eye for nuance, is the edge your team needs in 2025 and beyond. When the stakes are high, platforms like teammember.ai can help you navigate, validate, and push your research strategy further—without falling for promises that are too good to be true.

Sources

References cited in this article

- Insight7.io (insight7.io)

- Tremendous (tremendous.com)

- Exploding Topics (explodingtopics.com)

- Acuity Knowledge Partners (acuitykp.com)

- Qualtrics (qualtrics.com)

- Qlarity Access (qlarityaccess.com)

- Raconteur (raconteur.net)

- Attest (askattest.com)

- SurveySensum (surveysensum.com)

- AlphaSense (alpha-sense.com)

- Conjointly (conjointly.com)

- SurveyMonkey (surveymonkey.com)

- Sapio Research (sapioresearch.com)

- Workhorse (workhorse.to)

- Voxpopme (voxpopme.com)

- Forsta (forsta.com)

- Academia.edu (academia.edu)

- GlobeNewswire (globenewswire.com)

- Dovetail (dovetail.com)

- Search Engine Journal (searchenginejournal.com)

- Product Fruits (productfruits.com)

- Flatirons (flatirons.com)

- MarketinLife (marketinlife.com)

- Greenbook (greenbook.org)

- Bolt Insight (boltinsight.com)

- Computer Weekly (computerweekly.com)

- TrustArc (trustarc.com)

- Enzuzo (enzuzo.com)
