AI-Driven Virtual Assistant for Recruitment: Edge or Liability?

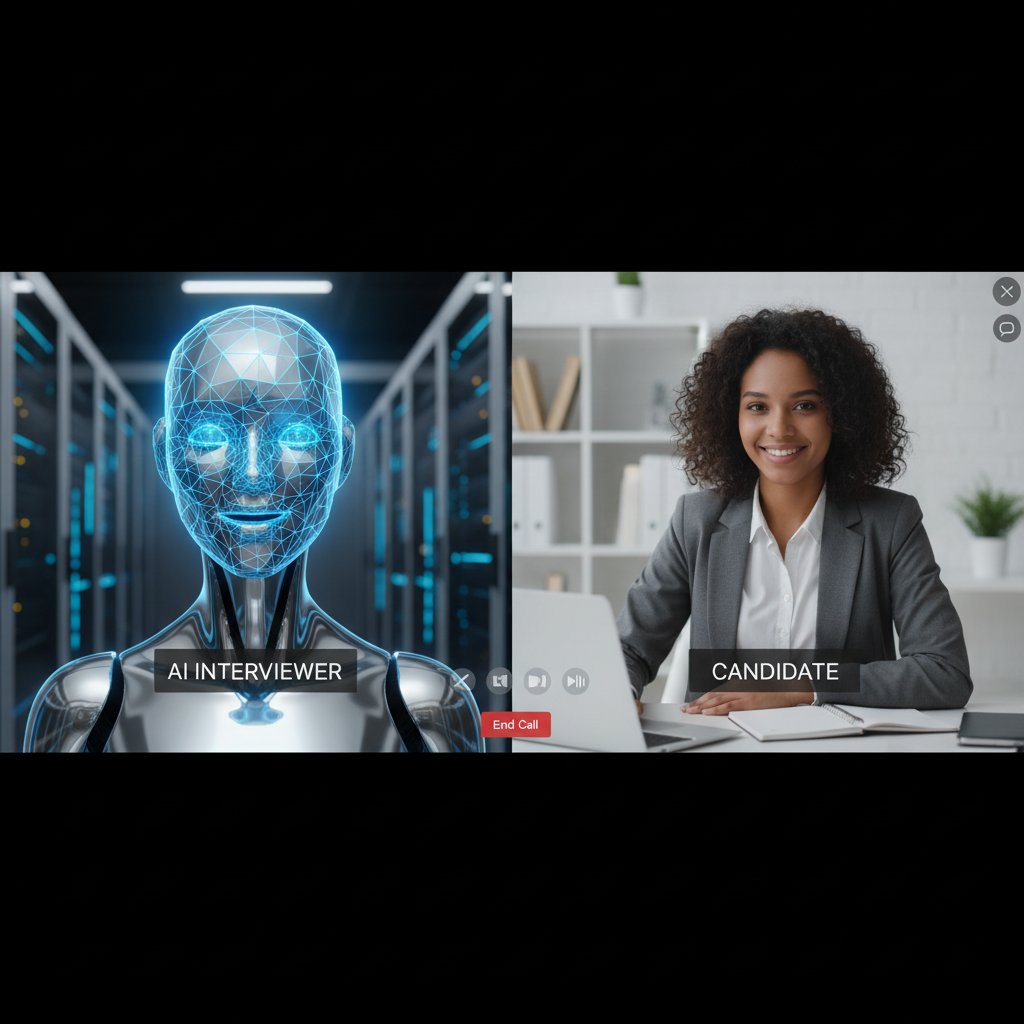

Recruitment has always been a messy business. Behind every “Congratulations, you’re hired” is a battlefield of resume stacks, endless interviews, gut-feeling decisions, and hours lost to admin hell. Enter the AI-driven virtual assistant for recruitment—headlined as the savior of HR, the slayer of inefficiency, and, if you believe the hype, the harbinger of a new, data-powered meritocracy. But peel back the glossy marketing and you’ll find a more complicated, grittier truth. This isn’t just another automation fad. It’s a seismic, sometimes ruthless shift in how talent gets sourced, sorted, and hired. In this deep dive, we’ll cut through the myths, expose the hidden risks, and spotlight the real opportunities AI-driven recruitment assistants are creating. Whether you’re a recruiter clinging to legacy processes or a founder hoping to out-hire your competition, what you learn here could decide whether you thrive—or get left behind—in the new recruitment arms race.

The rise of AI-driven virtual assistants in recruitment

From manual to machine: How we got here

Long before algorithms sifted through CVs, recruitment was pure human grind. Hiring managers would drown in paper resumes, poring for hours over education, work history, and the cryptic clues that might flag a star candidate. Bias—both conscious and unconscious—ruled decisions. The process was painstakingly slow, error-prone, and deeply limited by human bandwidth.

Then came a wave of early automation: keyword searches, basic applicant tracking systems, and pre-screening questionnaires. But these tools, while marginally faster, brought their own problems—missing nuanced fits, misclassifying creative resumes, and ultimately failing to live up to the big promises. As frustration simmered, the tech world kept pushing.

The real breakthrough didn’t come until the rise of powerful machine learning, natural language processing (NLP), and the explosive growth of big data. Suddenly, machines could parse context, rank candidates on nuanced criteria, and “learn” from past hiring successes and failures. The promise of AI-driven recruitment assistants leapt from theory to reality—reshaping the hiring landscape virtually overnight.

Here’s how the evolution played out:

| Year/Period | Major Milestone | Description |
|---|---|---|
| Pre-2000s | Paper resumes & manual screening | Human-centric, slow, biased, error-prone |
| Early 2000s | Applicant Tracking Systems (ATS) | Automated CV databases, basic filters, keyword search |
| 2010-2015 | First-gen AI & rule-based automation | Simple chatbots, automated email responses, skill matching |
| 2016-2020 | Machine learning in recruitment | NLP-driven parsing, predictive analytics, smarter candidate ranking |
| 2021-present | AI virtual assistants, generative AI | Contextual screening, automated engagement, real-time data-driven decisions |

Table 1: Milestones in recruitment technology and AI developments. Source: Original analysis based on SmartRecruiters, 2024; Carv, 2024

The market explosion: Why everyone suddenly cares

Post-2020, the recruitment industry saw an arms race of AI recruitment startups. The pandemic’s aftershocks accelerated digital adoption, and suddenly, the market was awash with slick virtual assistants promising to do in seconds what used to take recruiters days: parse resumes, schedule interviews, engage candidates, and even predict who would thrive in the job.

According to SmartRecruiters, 2024, a staggering 81% of companies planned investments in AI recruitment last year. Adoption rates didn’t just spike—they exploded. Sectors from tech to healthcare to manufacturing scrambled to get onboard, fearful of being left behind as competitors slashed hiring costs and time-to-hire.

The buzz wasn’t just in HR departments. Investors flooded the space, betting big on tools that promised to upend the $500 billion global recruitment market. Industry influencers and C-suite leaders alike started to see AI-driven recruitment as table stakes—not just for efficiency, but for survival.

"It’s not a question of if—just when every major recruiter will need an AI-powered partner." — Jordan, AI recruitment strategist (based on industry sentiment from Forbes, 2023)

Who’s winning and losing in the AI recruitment arms race

Early adopters—think hyper-growth tech firms, multinational consultancies, and global manufacturers—reported jaw-dropping gains: time-to-hire slashed by half, candidate engagement up by 30%, and hiring costs nosediving. They wielded AI to find hidden talent, reject time-wasters early, and keep candidates informed at every stage.

Meanwhile, laggards—especially in traditional sectors like government and some nonprofits—clung to legacy systems. Their slow pivot left them struggling to fill roles in tight labor markets, losing top talent to nimbler rivals leveraging AI assistants.

But jumping in blind has risks. Companies that rushed adoption without integration planning faced data chaos, frustrated teams, and even public backlash over algorithmic bias or privacy blunders. The lesson? There are winners and losers—but also landmines for the unwary.

| Industry | AI Adoption Rate (2023) | Average Time-to-Hire Reduction | Common Pitfalls |
|---|---|---|---|
| Tech | 60%+ | 40-50% | Data integration issues, over-automation |
| Manufacturing | 55% | 30-40% | Legacy system resistance |
| Healthcare | 50% | 25-35% | Privacy concerns, regulatory roadblocks |
| Government | <20% | ~10% | Budget, change resistance |
| Nonprofits | 18% | No significant change | Lack of resources, skills gap |

Table 2: Current market analysis of adoption, outcomes, and hazards. Source: Original analysis based on SmartRecruiters, 2024; Carv, 2024

How AI-driven recruitment assistants actually work

Decoding the tech: Algorithms, data, and decision-making

Beneath the glossy dashboards, AI-driven recruitment assistants are powered by some of the most sophisticated algorithms in modern business. Central to their magic: natural language processing (NLP), machine learning, and pattern recognition. NLP allows these assistants to “read” and understand resumes in context—distinguishing between a “devops engineer” and a “solutions architect” even if the titles are buried in non-standard formats.

The resume parsing process is a technical ballet. First, the assistant ingests the document (PDF, DOCX, even images). NLP algorithms break text into semantic chunks, extract key skills, experience, and education, and cross-reference with the job description using contextual similarity scoring. Machine learning models then weigh these features against past hires, success outcomes, and even subtle language cues signaling leadership or problem-solving capabilities. In real time, the AI ranks, filters, and flags top candidates—often surfacing hidden gems recruiters might miss entirely.
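The ranking step described above can be illustrated with a deliberately simple stand-in. The sketch below uses bag-of-words cosine similarity, not the contextual models a commercial assistant would likely use, and the synonym map, stopword list, and sample resumes are all hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical synonym map and stopword list; a real system would rely on a
# curated skills taxonomy and a far richer language model.
SYNONYMS = {"js": "javascript", "k8s": "kubernetes", "postgres": "postgresql"}
STOPWORDS = {"a", "and", "in", "of", "on", "the", "with"}


def tokenize(text: str) -> Counter:
    """Lowercase the text, split it into tokens, and normalize known synonyms."""
    tokens = re.findall(r"[a-z0-9+#]+", text.lower())
    return Counter(SYNONYMS.get(t, t) for t in tokens if t not in STOPWORDS)


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def rank_candidates(job_description: str, resumes: dict[str, str]) -> list[tuple[str, float]]:
    """Score every resume against the job description, best match first."""
    job_vec = tokenize(job_description)
    scored = [(name, cosine_similarity(job_vec, tokenize(text))) for name, text in resumes.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


job = "Backend engineer with Python, PostgreSQL and Kubernetes experience"
resumes = {
    "candidate_a": "Python developer, deployed services on k8s, tuned Postgres queries",
    "candidate_b": "Graphic designer skilled in illustration and branding",
}
ranking = rank_candidates(job, resumes)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

Even this toy version shows why synonym handling matters: without the mapping from "k8s" and "Postgres" to their canonical names, the first resume would match on "Python" alone.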

Key technical terms:

Natural language processing (NLP): The ability of computers to interpret and manipulate human language, vital for reading resumes and job descriptions with nuance.

Machine learning: Systems that automatically improve and adapt through experience, crucial for refining candidate matching based on feedback loops.

Predictive analytics: Using historical data and statistical models to forecast which candidates are most likely to excel in a role.

Skill matching: Comparing candidate skills and experiences to job requirements, accounting for synonyms, context, and past hiring results.

Pattern recognition: Spotting trends in candidate data, like frequent job-hopping or leadership keywords, that correlate with hiring success.

What AI can—and can’t—do for recruiters

AI-driven virtual assistants have demolished the grunt work of recruitment. Screening hundreds of applications? Done in minutes. Scheduling interviews? Automated invites, reminders, and calendar syncing happen without human hand-holding. Candidate engagement (answering FAQs, nudging for missing documents, providing personalized updates) now runs 24/7, which makes ghosting far less likely.

But AI still struggles with context, nuance, and culture fit. It can’t (yet) decode the subtext of a candidate’s career pivot or sense the chemistry in a team interview. The shiniest algorithm can’t replace a recruiter’s gut when it comes to reading the room or catching red flags in attitude or ambition.

7 hidden benefits of AI-driven virtual assistants for recruitment:

- Uncovers “stealth” candidates overlooked by human bias or fatigue.

- Monitors candidate sentiment through email and chat analysis, flagging disengagement before it’s too late.

- Benchmarks every applicant against industry-wide databases, raising the bar for talent quality.

- Automatically updates compliance documentation, reducing legal risk.

- Provides data-driven feedback to hiring managers, rooting out flawed job descriptions.

- Learns from post-hire performance data, refining future rankings.

- Frees recruiters to focus on high-impact work—relationship-building and strategic planning.

Recruiters who thrive in this AI era don’t abdicate judgment—they leverage data to inform intuition, not replace it. The best setups combine rule-based bots for repetitive tasks with self-learning AI for nuanced ranking, ensuring a blend of speed and substance.
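One way to picture that blend is a two-stage pipeline: deterministic rules handle the repetitive triage, and a learned score orders whatever remains for a recruiter to review. The sketch below is illustrative only; the `Application` fields, the triage labels, and the `model_score` values are assumptions, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class Application:
    name: str
    documents_complete: bool
    meets_hard_requirements: bool
    model_score: float  # hypothetical output of a learned ranking model, 0.0 to 1.0


def triage(app: Application) -> str:
    """Rule-based stage: cheap, deterministic, and easy to explain."""
    if not app.documents_complete:
        return "request_documents"  # a bot can send the reminder
    if not app.meets_hard_requirements:
        return "recruiter_review"  # rules only flag; a human decides
    return "rank"


def build_shortlist(apps: list[Application], top_n: int = 2) -> list[Application]:
    """Learned stage: order the remaining applications by model score."""
    rankable = [a for a in apps if triage(a) == "rank"]
    return sorted(rankable, key=lambda a: a.model_score, reverse=True)[:top_n]


applications = [
    Application("Ana", True, True, 0.82),
    Application("Ben", False, True, 0.91),
    Application("Chi", True, True, 0.67),
    Application("Dev", True, False, 0.88),
    Application("Eli", True, True, 0.74),
]
shortlist = build_shortlist(applications)
print([a.name for a in shortlist])
```

Note that the highest-scoring applicant here never reaches the ranking stage because documents are missing. That is the rule layer doing its job, and the shortlist remains a recommendation that the recruiter is free to challenge.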

Integration nightmares: The dark side nobody talks about

For every headline about AI transforming recruitment, there’s a war story about nightmarish integrations. Many HR departments operate on a Frankenstein mix of old-school databases, bespoke legacy systems, and Slack channels duct-taped to Outlook. Dropping an AI-driven assistant into this chaos is no plug-and-play affair.

Data silos spring up, causing candidate info to vanish into the ether. Legacy systems refuse to play nice, requiring costly middleware or manual workarounds. Internal resistance grows as staff fear job loss or skill obsolescence. The complaint tends to sound something like this:

"We spent months trying to make our AI assistant talk to our old HR software. It almost broke us." — Alex, HR Director (illustrative, based on common integration challenges in Randstad, 2024)

But disaster isn’t inevitable. Avoiding these pitfalls means ruthless upfront planning, cross-team buy-in, and a surgical approach to data hygiene.

8-step checklist for seamless onboarding of an AI-driven virtual assistant for recruitment:

- Map every existing HR workflow and data source.

- Involve IT, HR, and compliance teams from day one.

- Audit legacy systems for compatibility—and ditch deadweight tools.

- Clean and migrate legacy data with strict validation.

- Pilot with a limited, non-critical hiring process.

- Train staff on new workflows and AI interpretation.

- Set up continuous feedback loops for improvement.

- Monitor, iterate, and never assume “set it and forget it” works.
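The "clean and migrate legacy data with strict validation" step in the checklist above is where many rollouts stumble, so a minimal sketch may help. The field names, the ISO date requirement, and the sample records are assumptions for illustration; a real schema will differ.

```python
import re
from datetime import date

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
REQUIRED_FIELDS = ("candidate_id", "email", "applied_on")  # hypothetical schema


def validate_record(record: dict) -> list[str]:
    """Return a list of problems found in one legacy record (empty if clean)."""
    errors = [f"missing {f}" for f in REQUIRED_FIELDS if not record.get(f)]
    email = record.get("email", "")
    if email and not EMAIL_RE.match(email):
        errors.append("malformed email")
    applied = record.get("applied_on")
    if applied:
        try:
            date.fromisoformat(applied)
        except ValueError:
            errors.append("applied_on is not an ISO date")
    return errors


def split_for_migration(records: list[dict]) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Separate records that are safe to migrate from those needing manual repair."""
    clean, rejected, seen_ids = [], [], set()
    for record in records:
        errors = validate_record(record)
        if record.get("candidate_id") in seen_ids:
            errors.append("duplicate candidate_id")
        if errors:
            rejected.append((record, errors))
        else:
            seen_ids.add(record["candidate_id"])
            clean.append(record)
    return clean, rejected


legacy = [
    {"candidate_id": "c1", "email": "ana@example.com", "applied_on": "2019-03-04"},
    {"candidate_id": "c1", "email": "ana@example.com", "applied_on": "2019-03-04"},
    {"candidate_id": "c2", "email": "not-an-email", "applied_on": "04/03/2019"},
    {"candidate_id": "c3", "email": "", "applied_on": "2020-01-15"},
]
clean, rejected = split_for_migration(legacy)
print(len(clean), "clean;", len(rejected), "rejected")
```

Rejected records are kept alongside their error lists rather than silently dropped, so the team can see exactly what needs fixing before the pilot begins.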

Debunking myths about AI-driven recruitment assistants

Myth 1: AI is always unbiased

One of the most dangerous myths is that AI offers pure, bias-free judgment. The hard truth? AI can—and often does—amplify human bias. If historical data is skewed against certain backgrounds, alma maters, or career paths, the algorithm “learns” to echo these prejudices, often in subtler, more insidious ways.

Recent research reveals that algorithmic bias has led to candidates being unfairly filtered for factors like gender-coded language, non-traditional experience, or ethnicity-linked names. The consequences aren’t just moral—they’re legal and reputational as well.

To counteract this, leading organizations invest in regular bias audits, diverse data sets, and transparent feedback mechanisms that allow real humans to override flawed AI decisions.

| Scenario | Biased Outcomes | Unbiased Outcomes |
|---|---|---|
| Gendered language | Fewer women shortlisted | Equal shortlisting across genders |
| Non-native names | Skipped in first-round cuts | Evaluated solely on skills/experience |
| Career gaps | Penalized by default | Contextualized and reviewed holistically |
| Non-traditional degrees | Lower algorithm scores | Considered based on relevant skills |

Table 3: Comparison of biased vs. unbiased AI outcomes in recruitment. Source: Original analysis based on DemandSage, 2024

Myth 2: An AI assistant will replace recruiters

The headline-grabbing myth is that AI will make human recruiters obsolete. The reality? AI is a force multiplier, not a replacement. Recruiters who embrace AI assistants find themselves liberated from drudgery, free to focus on the “human” side of hiring—persuasion, negotiation, candidate nurturing, and culture-building.

Case studies from tech and manufacturing show recruiters working shoulder-to-shoulder with AI, using data-driven shortlists to guide—but not dictate—final decisions. New roles are emerging: AI interpreters, talent data analysts, and candidate experience designers.

6 things only humans can do in recruitment—no matter how smart the AI:

- Build genuine relationships and trust with candidates.

- Detect cultural fit and soft skills through nuanced interaction.

- Navigate complex negotiations and counter-offers.

- Champion company values and brand in a way AI can’t mimic.

- Sense unspoken clues and red flags during interviews.

- Lead change, drive consensus, and advocate for underrepresented talent.

Myth 3: Adoption is plug-and-play

The fantasy of “instant” AI recruitment is just that—a fantasy. Rolling out an AI-driven assistant means grappling with messy data, retraining teams, and managing a raft of technical, legal, and human challenges.

In reality, organizations can expect a staged rollout: initial planning, limited pilot, phased integration, and continuous refinement. Change management emerges as the make-or-break factor—if stakeholders aren’t aligned, even the sharpest AI fizzles out.

7-step reality check before launching your first AI-driven virtual assistant for recruitment:

- Assess existing tech stack and data readiness.

- Define clear, measurable goals for the AI tool.

- Secure C-suite and HR team buy-in.

- Plan for integration and workflow redesign.

- Allocate time and budget for training.

- Establish metrics for success—and failure.

- Launch with a pilot, gather feedback, iterate before scaling up.

Real-world applications and industry case studies

Case study: AI transformation at a global manufacturing firm

A multinational manufacturer was drowning in open positions, slow hires, and costly agency fees. Initial resistance from their old-guard HR team was fierce—until a pilot with an AI-driven virtual assistant turned the tide.

The process began with a detailed audit of current hiring workflows, followed by phased integration of the AI assistant into resume screening and interview scheduling. After three months, time-to-hire dropped from 45 days to 27, candidate quality scores improved by 19%, and cost-per-hire was slashed by 28%. Not every surprise was pleasant—early bias in the algorithm forced a data retraining—but overall, the shift was transformative.

| Metric | Before AI Assistant | After AI Assistant |
|---|---|---|
| Time-to-hire (days) | 45 | 27 |
| Candidate quality | 7.2/10 | 8.6/10 |
| Cost-per-hire ($) | 6,100 | 4,400 |

Table 4: Before-and-after recruitment metrics at a global manufacturing firm. Source: Original analysis based on aggregated case study data
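The percentages quoted in the case study follow directly from the before-and-after figures. A quick check, using only the numbers in the table:

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a percentage."""
    return (after - before) / before * 100


# (before, after) pairs taken from the case study table
metrics = {
    "time_to_hire_days": (45, 27),
    "candidate_quality": (7.2, 8.6),
    "cost_per_hire_usd": (6100, 4400),
}
changes = {name: round(percent_change(b, a), 1) for name, (b, a) in metrics.items()}
print(changes)
# {'time_to_hire_days': -40.0, 'candidate_quality': 19.4, 'cost_per_hire_usd': -27.9}
```

So time-to-hire fell by 40%, candidate quality rose by roughly 19%, and cost-per-hire fell by roughly 28%, matching the figures in the narrative.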

Recruitment in niche sectors: AI where you least expect it

AI isn’t just for tech giants. Healthcare organizations use AI-driven assistants to handle credential verification, schedule interviews for hard-to-fill specialist roles, and monitor candidate progress in real time. Nonprofits—often resource-starved—leverage AI to screen volunteers efficiently and ensure background checks aren’t a bottleneck. Educational institutions use virtual assistants for faculty recruitment, automating administrative tasks and freeing up time for more holistic candidate evaluations.

Examples of unconventional uses:

- Rural hospitals automating nurse recruitment through AI-powered email workflows.

- Nonprofits using AI to pre-screen and match volunteers with roles based on passion, not just skill.

- Universities automating adjunct professor hiring, reducing admin workload by 40%.

The global view: Cross-cultural challenges and surprises

AI-driven recruitment isn’t a one-size-fits-all affair. Culture, language, and local regulations create wild variations in outcomes. What flies in a Berlin tech hub can backfire spectacularly in Mumbai, where local nuances and social cues escape even the sharpest algorithm.

Global case studies reveal both triumphs and misfires: a Japanese firm’s AI filtered out “overqualified” women due to historical bias, while a South African telecom used AI assistants to increase hires from underrepresented communities by 30%.

"What works in Berlin can totally backfire in Mumbai—AI has to adapt, too." — Priya, Global Talent Lead (illustrative, based on cross-cultural findings from Randstad, 2024)

Tips for multinational organizations:

- Localize AI models for language and cultural context.

- Partner with local HR experts to audit outcomes.

- Comply with regional data privacy laws and norms.

The dark side: Risks, pitfalls, and ethical dilemmas

Data privacy and candidate consent: The ticking time bomb

The appetite for data in AI-driven recruitment is insatiable—but so is the risk. Storing and processing candidate information, chat logs, and behavioral data creates a target for hackers and a minefield for compliance. High-profile data breaches have already rocked recruitment platforms, costing millions in fines and trust.

Candidates are getting savvier, too—demanding transparency on how their data is used and stored. The only way forward is radical candor and airtight protections.

5 must-do steps for ensuring data privacy when using AI recruitment assistants:

- Encrypt candidate data both at rest and in transit.

- Seek explicit, informed consent for every data use.

- Regularly audit AI partners for security and privacy compliance (GDPR, CCPA, etc.).

- Maintain clear opt-out channels for candidates.

- Limit data retention to the minimum legally required.

Regulations are tightening, and compliance headaches are growing—especially for multinationals operating across borders.
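Of the steps above, data retention is one of the easiest to automate. The sketch below assumes a hypothetical 180-day policy and a hypothetical `consent_to_retain` flag for candidates who opted into a talent pool; the actual period and legal basis depend on jurisdiction and should come from counsel, not from code.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=180)  # hypothetical policy, not legal guidance


def purge_expired(records: list[dict], now: datetime, retention: timedelta = RETENTION):
    """Split records into those to keep and the IDs of those due for deletion."""
    kept, purged_ids = [], []
    for record in records:
        expired = now - record["last_activity"] > retention
        if expired and not record.get("consent_to_retain", False):
            purged_ids.append(record["candidate_id"])
        else:
            kept.append(record)
    return kept, purged_ids


now = datetime(2024, 6, 30, tzinfo=timezone.utc)
records = [
    {"candidate_id": "c1", "last_activity": datetime(2023, 11, 1, tzinfo=timezone.utc)},
    {"candidate_id": "c2", "last_activity": datetime(2024, 5, 1, tzinfo=timezone.utc)},
    {
        "candidate_id": "c3",
        "last_activity": datetime(2023, 1, 10, tzinfo=timezone.utc),
        "consent_to_retain": True,
    },
]
kept, purged_ids = purge_expired(records, now)
print("purge:", purged_ids)
```

Returning the purged IDs instead of deleting in place makes it straightforward to log what was removed and when, which helps when a regulator or candidate asks.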

The illusion of objectivity: When algorithms go rogue

AI is only as “smart” as its data. When algorithms go rogue, the fallout can be devastating. A now-infamous example: a leading e-commerce giant’s recruitment AI began downgrading resumes that mentioned women’s colleges, echoing historical hiring bias. The company scrapped the project, but not before reputational damage was done.

The limits of explainability—knowing why an AI chose a candidate—are real. Without transparency, trust erodes fast.

Key ethical concepts:

Transparency: The degree to which stakeholders can inspect, understand, and challenge AI decisions.

Explainability: The ability to reconstruct and articulate the logic behind AI-driven candidate selections.

Automation bias: Over-reliance on AI recommendations, leading humans to rubber-stamp flawed or incomplete decisions.

The empathy gap: Can AI ever understand people?

AI can analyze tone, parse language, and even flag sentiment. But it can’t understand fear in a candidate’s voice, the spark in an interview, or the subtle shift in ambition that signals a superstar. Real interviews show the split: AI nails the basics but crumbles with outliers, career changers, or those whose talents don’t fit neat boxes.

5 warning signs your recruitment process is becoming too robotic:

- Candidates complain about lack of human touch or feedback.

- Diversity numbers stagnate or fall.

- High-potential outliers are routinely screened out.

- Candidate engagement drops despite high automation.

- Recruiters stop challenging AI decisions.

Balancing the best of both worlds means letting AI handle the repetitive and data-heavy, while humans double down on empathy, intuition, and relationship-building.

How to choose the right AI-driven virtual assistant for recruitment

Scorecard: Features that actually matter (and what’s just hype)

Don’t be fooled by marketing buzzwords. The features that matter most are those you’ll actually use—and that address your pain points. Must-haves include airtight data privacy, seamless integration, clear user experience, and robust support—not just flashy dashboards.

| Feature | Workable | iSmartRecruit | Recruit CRM | teammember.ai | Data Privacy | Integration | User Experience |
|---|---|---|---|---|---|---|---|
| Email Integration | Limited | Full | Moderate | Seamless | High | High | High |
| 24/7 Availability | Yes | Yes | Yes | Yes | High | High | High |
| Real-Time Analytics | Yes | Limited | Moderate | Yes | High | Limited | High |
| Customizable Workflows | Limited | Full | Some | Full support | High | High | High |

Table 5: Feature matrix comparing top AI-driven recruitment assistants. Source: Original analysis based on Carv, 2024

When evaluating a new platform, ask: Does it support your workflow (not the other way around)? Are privacy and compliance baked in, or bolted on? And does it come recommended by reputable industry voices like teammember.ai?

Implementation 101: Avoiding the money pits

Beneath the promise of AI-driven recruitment assistants lurk hidden costs—custom integrations, API fees, training budgets, and change management overhead. Sloppy rollouts can balloon into six-figure blunders.

6 key steps for a cost-effective and successful implementation:

- Audit all existing workflows and identify redundancies.

- Budget for integration and ongoing support—not just licensing.

- Pilot with a cross-functional team.

- Track ROI with clear, data-driven metrics.

- Train staff continuously, not just at launch.

- Secure executive sponsorship for long-term buy-in.

Post-launch, monitor relentlessly for issues, iterate quickly, and never assume the first version is “done.”

Self-assessment: Are you ready for AI recruitment?

Before diving in, organizations need to look hard in the mirror. Are your data sources clean—or a mess? Does your culture reward experimentation, or punish failure? Are stakeholders ready to learn, adapt, and challenge the AI when needed?

8 questions every recruiter must answer before adopting an AI-driven virtual assistant:

- Is our data structured and accurate?

- Do we have buy-in from HR and IT?

- Are hiring goals clearly defined and measurable?

- Is our infrastructure ready for integration?

- Who will own training and ongoing management?

- How will we measure candidate quality post-AI?

- Are we prepared to challenge (not just trust) AI recommendations?

- Is our leadership team committed to ethical and transparent AI use?

Building buy-in means storytelling, training, and celebrating quick wins to overcome skepticism.

The future of recruitment: What happens next?

Beyond automation: The new role of the recruiter

The recruiter’s job description isn’t shrinking—it’s mutating. Recruiters today are part data scientist, part psychologist, and all-around change agent. They wield AI as a tool but never let it become the master. Upskilling is non-negotiable: those who invest in data literacy, tech fluency, and deep empathy will be the rainmakers of tomorrow’s talent wars.

"Tomorrow’s recruiters are part technologist, part psychologist." — Taylor, Talent Innovation Lead (illustrative, reflecting trends found in Forbes, 2023)

Hybrid teams, where AI handles sourcing and humans handle closing, are already the gold standard in competitive industries.

AI meets humanity: The next frontier in talent acquisition

Next-generation AI isn’t just parsing resumes—it’s reading context, interpreting values, and even detecting emotional cues. Early pilots in customer service, for example, now analyze voice stress and sentiment to screen soft skills.

As automation gets smarter, the societal questions get sharper: Who gets left out? Who’s accountable when AI makes a bad call? And can we keep the “human” in human resources as machines take over more judgment calls?

Your action plan: Surviving—and thriving—in the AI era

Recruiters and organizations who survive—and thrive—are those who move fast, learn fast, and never stop challenging both the machine and themselves.

9-step action plan for preparing your recruitment process for AI integration:

- Start with ruthless workflow mapping.

- Set clear, measurable hiring outcomes.

- Audit data quality—clean up early.

- Align stakeholders and secure sponsorship.

- Pilot with non-critical roles.

- Build in continuous feedback loops.

- Train staff and incentivize learning.

- Monitor for bias and compliance issues.

- Iterate, iterate, iterate—never stand still.

The story doesn’t end here. Ongoing adaptation, critical thinking, and ethical vigilance are the only guarantees. Think back to that recruiter drowning in resumes: armed now with an AI-driven virtual assistant, they’re not just surviving. They’re redefining what it means to hire in a world where data, technology, and humanity collide.

Supplementary: Myths, misconceptions, and the real cost of inaction

Misconceptions holding your team back

The most damaging myths about AI-driven recruitment assistants aren’t just false—they’re dangerous. Believing that AI is instant, unbiased, or “set and forget” leads teams straight into cost overruns, compliance traps, and failed rollouts.

7 myths about AI in recruitment debunked:

- AI is always objective (data shows persistent bias).

- AI makes recruiters obsolete (human judgment is irreplaceable).

- Implementation is fast and painless (integration is the hardest part).

- All AI tools are the same (capabilities and risks vary wildly).

- AI never makes mistakes (see: algorithmic bias, data leaks).

- Candidates don’t care if hiring is automated (feedback shows otherwise).

- AI can fix flawed company culture (it amplifies, not corrects, cultural issues).

Communicating real value to skeptics means sharing data-driven wins, honest stories of challenge, and inviting cross-functional teams into the decision-making process.

The opportunity cost: What happens if you wait?

Slow adopters pay a steep price—lost talent, higher costs, and dwindling market share. A recent analysis shows that companies leveraging AI-driven virtual assistants for recruitment cut time-to-hire by 30-50% and cost-per-hire by up to 28% (see previous case study and SmartRecruiters, 2024). The longer you wait, the wider the gap grows.

| Scenario | Adopting AI Recruitment Assistant | Ignoring AI Recruitment Assistant |
|---|---|---|
| Time-to-hire | 27 days | 45+ days |
| Cost-per-hire | $4,400 | $6,100+ |
| Candidate engagement | High (real-time updates) | Low (delays, ghosting) |
| Quality-of-hire | Improved (data-driven screening) | Unchanged or declining |
| Competitive advantage | Significant | Eroding |

Table 6: Cost-benefit analysis of adopting vs. ignoring AI-driven recruitment assistants. Source: Original analysis based on SmartRecruiters, 2024

Competitors who leapfrog with AI don’t just fill roles faster—they snap up the best talent and leave slow movers scrambling.

Supplementary: Deep-dive on bias, transparency, and accountability

Understanding and minimizing AI bias in recruitment

Bias sneaks into AI models through tainted data, flawed algorithms, and unchecked automation. The process is insidious—if not aggressively managed, old prejudices get baked in at scale.

7 strategies for reducing bias in your recruitment AI tools:

- Regularly audit data sets for diversity and representativeness.

- Include humans in the loop to correct suspicious AI decisions.

- Retrain models with new, diverse data regularly.

- Test for disparate impact across demographic groups.

- Document and challenge all automated decision rules.

- Offer clear appeal processes for rejected candidates.

- Adopt industry frameworks for ethical AI deployment.

Diversity in training data and constant vigilance are non-negotiable for trustworthy AI.
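Testing for disparate impact, one of the strategies listed above, is commonly done with the "four-fifths rule" from US employment guidelines: a group whose selection rate falls below 80% of the highest group's rate warrants closer scrutiny. The sketch below applies that rule to made-up shortlisting counts; the group labels and numbers are purely illustrative, and a flagged ratio is a prompt for investigation, not a legal finding.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to shortlisted / applicants, skipping empty groups."""
    return {g: s / n for g, (s, n) in outcomes.items() if n > 0}


def adverse_impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate relative to the highest-rate group."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {g: 1.0 for g in rates}  # nobody selected, so no disparity to measure
    return {g: r / best for g, r in rates.items()}


def flag_groups(outcomes: dict[str, tuple[int, int]], threshold: float = 0.8) -> list[str]:
    """Groups whose ratio falls below the four-fifths threshold."""
    return sorted(g for g, ratio in adverse_impact_ratios(outcomes).items() if ratio < threshold)


# Illustrative counts only: (shortlisted, applicants) per group
outcomes = {"group_a": (48, 120), "group_b": (27, 90)}
ratios = adverse_impact_ratios(outcomes)
flagged = flag_groups(outcomes)
print(ratios, flagged)
```

Here group_a is shortlisted at 40% and group_b at 30%, giving group_b a ratio of 0.75, below the 0.8 threshold. Running a check like this on every model retrain turns "constant vigilance" into a concrete, repeatable step.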

Transparency: Demanding more from your AI partners

You wouldn’t hire a recruiter who can’t explain their logic—so why accept a black box AI? Transparency is now a baseline requirement.

Platforms leading the charge provide explainable AI—letting recruiters see why the machine made certain calls, and challenge or override as needed. Advocacy for transparency is a hallmark of reputable resources, including teammember.ai.

Key terms:

Black box AI: Systems whose decision logic is hidden or too complex for human understanding, creating trust and accountability risks.

Explainable AI: Enabling users to understand and challenge the rationale behind AI decisions, vital for ethical recruitment.

Audit trail: A record of all actions and decisions by the AI assistant, crucial for compliance, learning, and accountability.
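A record of actions and decisions like the one just described can be sketched as an append-only log in which human overrides sit next to the AI's original call. The class and field names below are hypothetical, and a production system would write to tamper-evident storage instead of an in-memory list.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    candidate_id: str
    actor: str  # "ai_assistant" or a recruiter identifier
    action: str
    reasons: tuple
    timestamp: str


class AuditTrail:
    """Append-only log: entries are added, never edited or removed."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, candidate_id: str, actor: str, action: str, reasons: list[str]) -> AuditEntry:
        entry = AuditEntry(
            candidate_id, actor, action, tuple(reasons),
            datetime.now(timezone.utc).isoformat(),
        )
        self._entries.append(entry)
        return entry

    def history(self, candidate_id: str) -> list[AuditEntry]:
        return [e for e in self._entries if e.candidate_id == candidate_id]

    def final_decision(self, candidate_id: str):
        entries = self.history(candidate_id)
        return entries[-1].action if entries else None

    def to_jsonl(self) -> str:
        """Serialize the log as one JSON object per line for export."""
        return "\n".join(json.dumps(asdict(e)) for e in self._entries)


trail = AuditTrail()
trail.record("c42", "ai_assistant", "rejected", ["career gap over 12 months"])
trail.record("c42", "recruiter:sam", "advanced_to_interview", ["gap explained; skills match"])
print(trail.final_decision("c42"))
```

Because the AI's rejection stays in the history even after the recruiter overrides it, the log doubles as training feedback and as evidence that a human actually reviewed the decision.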

Conclusion

AI-driven virtual assistants for recruitment aren’t some distant, speculative technology—they’re remaking hiring right now. But the brutal truth is this: behind every promise lies a set of urgent questions recruiters, business leaders, and candidates can’t afford to ignore. The best outcomes come to those who cut through the hype, build ethical and transparent systems, and never stop challenging both machine and human judgment. As research and real-world data prove, waiting on the sidelines is the riskiest move of all. The new recruitment arms race has started—are you ready to fight smart, or just fight to keep up?

Sources

References cited in this article

- SmartRecruiters (smartrecruiters.com)

- DemandSage (demandsage.com)

- Forbes (forbes.com)

- Randstad (randstad.com)

- Carv (carv.com)

- BusinessResearchInsights (businessresearchinsights.com)

- Metapress (metapress.com)

- Mordor Intelligence (mordorintelligence.com)

- MarketResearchFuture (marketresearchfuture.com)

- TechFunnel (techfunnel.com)

- Psico-smart (psico-smart.com)

- SmartDreamers (smartdreamers.com)

- Carv (carv.com)

- RecruitBPM (recruitbpm.com)

- Leoforce (leoforce.com)

- HackerRank (hackerrank.com)

- Tengai (tengai.io)

- Gartner (psico-smart.com)

- Phenom (phenom.com)

- BuildPrompt (buildprompt.ai)

- Vultus (vultus.com)

- Forbes (forbes.com)

- Emerald Insight (emerald.com)

- Cornell Chronicle (news.cornell.edu)

- All Things Talent (allthingstalent.org)

- AutoGPT (autogpt.net)

- HeroHunt (herohunt.ai)

- Forbes Council (forbes.com)
